There has been a fair bit of chatter in the blogosphere lately about the perennial problem of low quality chemical data, e.g. chemical structures that do not adequately describe the material they claim to represent, messed up names, broken references, mistaken or underspecified accompanying data, etc.

This latest round of discussion seems to have been triggered by the recent publication of the so-called NCGC Pharmaceutical Collection, which not only claims to be definitive, but may in fact be unusually bad, such that even practitioners who have no illusions about the quality of data in general use are noticeably unimpressed (e.g. ChemConnector, In The Pipeline). While I haven’t personally examined the offending dataset, this is as good an occasion as any to weigh in with my long-held opinion on how things have gotten so bad that chemical data wranglers routinely expect between 5 and 20% of their source data to be junk.

My take on the matter is this: the data is bad because crucial parts of it are thrown away.

By that I mean the information existed, and was correct, but some of it was discarded, and now the database that you are trying to browse, search, import, or use to train a QSAR model, has a double-digit failure rate.

Most chemical data originates from scientists. I mean real data, not computed virtual data: the kind that can be traced back to some number of experiments, from which a chemist with expertise in the subject drew a conclusion and decided that the information was worthy of being submitted to a peer-reviewed journal. The point is not that this hypothetical scientist was necessarily right about this fact, but rather that the process of communicating the fact was most probably performed correctly.

Whether this hypothetical scientist is a grad student who made a new compound, or a professor, or an industrial chemist, or a chemical catalog vendor, the point is that the originators of the data have both the means and the incentive to do a good job of representing the information that they are trying to present to the rest of the scientific community, and that this close to the source, there are safeguards: e.g. peer review for researchers, or customer complaints for vendors. These safeguards are strong enough to ensure that care is taken, and while good old fashioned mistakes do happen, they are quite infrequent.

Given that this is where most useful data comes from, where does it go wrong?

The short answer is: software.

There has been much discussion of various kinds of transcription errors that cause problems for compiled databases of chemical structures and their auxiliary data, but maybe not quite enough that goes back to the root cause. The way things are done currently ensures that the process of compiling chemical structure/property databases is more or less doomed from the outset.

The two codependent problems that I want to address are:

- software that chemists use to digitise their data often uses file formats that are not capable of describing all of the essential data that the chemist is trying to communicate

- most chemists use software as a pen-and-paper equivalent, with the intention of communicating with other chemists

The blame for both of these problems I assign entirely to producers of chemical information software, and none of it to the chemists who use that software. I’ve worn both of these hats, but lately mainly the first category, and I regard it as my job and moral duty to do my best to get the software right; chemists have other things to concern themselves with, like getting the science right. We got the easy part.

So let me illustrate with some examples where data originates from a chemist who had the data quality issue entirely under control, but is transformed into junk sometime before winding up in some structure/property database.

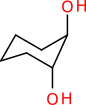

Consider a structure representation of 1,2-cyclohexanediol:

The structure has two atom stereocentres, but no wedge bonds are used. What does the chemist mean by this? Maybe the mixture is racemic, maybe the stereochemistry is unknown, maybe for the reaction being described it makes no difference, or maybe the lack of wedge bonds is, by way of mutual understanding, a way of signifying that it is the meso form. Whatever it was that the chemist intended to convey, it is likely that when the structure is represented digitally, e.g. as a ChemDraw sketch, an MDL MOL file or a SMILES string, the meaning will be discarded, and replaced with an assumption (i.e. unknown).

Or maybe the structure shown above was not what the chemist drew at all – maybe the original lab notes had it drawn like this:

To a chemist, this chair form clearly indicates trans stereochemistry, but to a software algorithm it is exceedingly difficult to reliably guess 3D clues from these kinds of wedgeless drawings. If it went through SMILES as an intermediate, or was redepicted for some other reason, chances are this human-oriented information has been lost permanently.

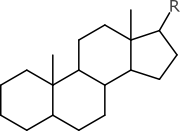

Or what if you saw this structure:

If you happen to be a steroid chemist, you probably won’t be slowed down too much by the total absence of stereochemistry labels. The original context probably makes it clear that it’s the normal kind, and that the wedgeless representation is a kind of shorthand. But unless a parsing algorithm has been specially forewarned to expect this particular example, and assured that the “non-normal” kinds are never underspecified in this way, then the digital representation will probably just assume that it is racemic or unknown, which is not the case. Information has been lost for lack of context.

My personal favourite examples of structure data loss involve non-druglike structures, which few if any of the currently popular structure formats are capable of representing adequately. Consider somebody curating a published chemical reaction that involved the use of tin(II) chloride, by drawing it with a sketcher:

Without proper control over implicit hydrogen atoms, and without a separate field to manually provide the molecular formula as accompanying data, many cheminformatics algorithms will incorrectly guess the formula as H2Cl2Sn. If somebody decides to “improve” the database by automatically computing the molecular formula and molecular weight, there is no way to know whether the two extra hydrogen atoms should be added or not, and so the database will now be contaminated with a guess. And of course it’s an unnecessary failure, because the chemist who published the paper, and most likely the person who curated it later, knew perfectly well whether it was tin(II) and not tin(IV).
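To make the failure concrete, here is a minimal Python sketch of the kind of valence-filling rule that produces the wrong answer. The `DEFAULT_VALENCE` table and the `guess_formula` function are hypothetical, made up for illustration, and are not taken from any particular toolkit.

```python
from collections import Counter

# Hypothetical "default valence" table of the sort a naive toolkit might
# apply to every atom. Tin is listed as tetravalent only, which is
# exactly the assumption that breaks tin(II) compounds.
DEFAULT_VALENCE = {"H": 1, "C": 4, "N": 3, "O": 2, "Cl": 1, "Sn": 4}


def guess_formula(atoms, bonds):
    """Return element counts after filling every atom up to its default valence.

    atoms: list of element symbols
    bonds: list of (atom_index, atom_index, bond_order) tuples
    """
    used = [0] * len(atoms)
    for a, b, order in bonds:
        used[a] += order
        used[b] += order

    counts = Counter(atoms)
    for element, valence_used in zip(atoms, used):
        # The guess: any unused valence must be an implicit hydrogen.
        counts["H"] += max(0, DEFAULT_VALENCE[element] - valence_used)
    return counts


# Tin(II) chloride as sketched: Cl-Sn-Cl, two single bonds.
tin_chloride = guess_formula(["Sn", "Cl", "Cl"], [(0, 1, 1), (0, 2, 1)])
print(dict(tin_chloride))
```

The tin atom comes back with two hydrogens that nobody drew. Nothing in the input says whether that is right, so a format that cannot record "zero hydrogens here" leaves the algorithm with no alternative but to guess.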

There are all kinds of other things that go wrong with inorganic structures. While most large chemical database collections are mainly full of the types of organic structures used by the pharmaceutical industry, those that step off the top right segment of the main group are more important than many people realise, and their failure rate with common structure encodings is abysmal.

Consider the hydrogen-suppressed style representation of a palladium ammonia complex:

Most chemists draw dative bonds as a single line, which makes them indistinguishable from covalent single bonds. This is a problem because it breaks the valence-counting rules that are required to reliably calculate implicit hydrogen atoms, among other things. Using the same bond type for the Pd-Cl bond (which is formally covalent) and the Pd-N bond (which is a 2-electron ligand donor) throws away information. That information can easily be recreated in the minds of other inorganic chemists, but not by a software algorithm, which is likely to conclude that the ligands are actually NH2 rather than NH3, which is wrong, and unnecessarily so.
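A rough sketch of what is being lost, assuming a hypothetical `dative` flag on the bond record (the `Bond` class and `nitrogen_hydrogens` function are invented for this illustration and do not describe any existing format):

```python
from dataclasses import dataclass

N_VALENCE = 3  # neutral nitrogen forms three covalent bonds


@dataclass
class Bond:
    order: int
    dative: bool = False  # hypothetical flag: ligand donates both electrons


def nitrogen_hydrogens(bonds):
    """Implicit H count on a neutral nitrogen, given its drawn bonds."""
    # A dative bond uses the nitrogen lone pair, so it consumes no valence.
    covalent = sum(b.order for b in bonds if not b.dative)
    return max(0, N_VALENCE - covalent)


# Pd-N drawn as an ordinary single bond: the ligand is read as NH2.
as_drawn = nitrogen_hydrogens([Bond(order=1)])

# Same bond, but recorded as dative: the ligand is read as NH3.
as_intended = nitrogen_hydrogens([Bond(order=1, dative=True)])

print(as_drawn, as_intended)
```

One extra bit per bond is enough to separate the two readings, but only if the file format has somewhere to put it.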

It gets worse, not better. Most chemists like to draw ferrocene like this:

It is quite possible to draw it exactly this way using semi-cheminformatic drawing packages like ChemDraw, but this is terrible for an algorithm. A parser attempting to understand what is meant by this would see a connected component consisting of an iron atom connected to two terminal carbon atoms, i.e. C2H6Fe, two more connected components that look like cyclopentane, i.e. C5H10, and two ellipses, which are arbitrary drawing objects with no cheminformatic meaning whatsoever. This is definitely nothing like the ferrocene molecule that the chemist intended. It is an example of the gulf that separates the cheminformatician’s need to have a digitally descriptive definition of a distinct molecular species, and a chemist’s need to transfer information from one human brain to another human brain.
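The parser's view can be reproduced in a few lines of Python. This is only a sketch: the atom numbering and the way the drawing is encoded are assumptions made for the example, and the only chemistry rule applied is that each carbon is filled up to four bonds.

```python
from collections import Counter

# The ferrocene sketch as a parser would receive it (hypothetical encoding):
# atom 0 is Fe, atoms 1-2 are the carbons at the ends of the two Fe lines,
# atoms 3-7 and 8-12 are the two five-membered rings. The ellipses are
# drawing objects, so they never reach the atom/bond tables at all.
atoms = ["Fe"] + ["C"] * 12
bonds = [(0, 1), (0, 2)]
bonds += [(3 + i, 3 + (i + 1) % 5) for i in range(5)]
bonds += [(8 + i, 8 + (i + 1) % 5) for i in range(5)]


def components(n_atoms, bonds):
    """Group atom indices into connected components with a simple union-find."""
    parent = list(range(n_atoms))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a, b in bonds:
        parent[find(a)] = find(b)

    groups = {}
    for i in range(n_atoms):
        groups.setdefault(find(i), []).append(i)
    return list(groups.values())


def formula(group):
    """Element counts for one component, filling carbon to four bonds."""
    degree = Counter()
    for a, b in bonds:
        degree[a] += 1
        degree[b] += 1
    counts = Counter(atoms[i] for i in group)
    counts["H"] = sum(4 - degree[i] for i in group if atoms[i] == "C")
    return dict(counts)


fragments = [formula(g) for g in components(len(atoms), bonds)]
for fragment in fragments:
    print(fragment)
```

Three unrelated fragments come out, one C2H6Fe and two C5H10, and no amount of cleverness downstream can tell that they were ever meant to be a single sandwich complex.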

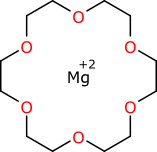

Sometimes the weird-bond problem is circumvented by just leaving them out:

But this is just yet another example of throwing away good data. If the chemist was explicitly stating that the magnesium ion is chelated by all six of the oxygen atoms of the crown ether, then this structure representation no longer does an adequate job of capturing the chemistry.

Like most people, chemists are prone to laziness. Nobody likes to draw out parts of structures that are chemically uninteresting to the matter at hand, and so chemistry has thousands of common structure abbreviations that are relatively well agreed upon (e.g. Me, Et, Pr, Bu, tBu, Ph, Bz, etc.). But there are countless more that are only used within subdisciplines, or individual research groups, or by lone individuals. And they don’t always mean the same thing to the same people.

Anyone who has to draw Wilkinson’s catalyst on a regular basis probably uses a notation like this:

where L stands in for triphenylphosphine, which is very tedious to draw in full. From one rhodium chemist to another, it is easy to forget that “L” is a generic abbreviation, and is often used for other ligands. If the above structure is encountered without context, a smart parser might guess triphenylphosphine and it might be right. But it is just as likely to be seriously wrong.

The abbreviation issue is hardly limited to inorganic chemistry – that example is just author experience bias. There is no definitive standard for abbreviations. While “tBu” might be well enough understood, a chemist who likes to type “Bu(t)” instead may end up producing data that is unreadable. There is also the ancillary problem of letting nonspecific abbreviations like “X” or “R” slip into databases, without realising that they are supposed to be expanded out from a table of fragment definitions.
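As a minimal sketch of how software could at least refuse to guess, consider a lookup that accepts known spelling variants but raises an error on placeholders. The tables below are tiny and hypothetical, and the names `ABBREVIATIONS`, `ALIASES`, `PLACEHOLDERS` and `expand` are invented for the example.

```python
# Small illustrative table; the SMILES fragments are written with the
# attachment atom first. A real table would be far larger.
ABBREVIATIONS = {
    "Me": "C",
    "Et": "CC",
    "tBu": "C(C)(C)C",
    "Ph": "c1ccccc1",
}

# Spelling variants mapped onto a canonical key (hypothetical examples).
ALIASES = {"Bu(t)": "tBu", "t-Bu": "tBu"}

# Placeholders that only mean something alongside a fragment table or context.
PLACEHOLDERS = {"L", "R", "X"}


def expand(label):
    """Return a SMILES fragment for a label, or raise rather than guess."""
    key = ALIASES.get(label, label)
    if key in PLACEHOLDERS:
        raise ValueError(f"{label!r} is a placeholder and needs its definition")
    if key not in ABBREVIATIONS:
        raise ValueError(f"unknown abbreviation {label!r}")
    return ABBREVIATIONS[key]


print(expand("Bu(t)"))
```

Here `expand("L")` raises a `ValueError` instead of quietly returning triphenylphosphine, which pushes the question back to somebody who actually knows the answer.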

All the examples so far assume that a person is transcribing a chemist’s understanding of a structure directly into some kind of digital representation, albeit one that may be inadequate for the task. But there are also many efforts to digitise data automatically from its printed form, by OCR (Optical Character Recognition) algorithms. While technologically incredibly cool, this is a really bad idea for compiling reliable data. Accounting for a multitude of typographical variations makes the rest of these problems seem easy.

Is this 2-iodopropane, or a really bad drawing of 2-methylbutane? Very hard to tell, if you happen to be an algorithm.

Data problems are not just limited to structures. As well as significant interest in mining old printouts for structure data via OCR, there is also a lot of interest in parsing chemical meaning from human-readable text. Some years ago I did some work on a project designed to pull out chemical tags from a recipe, such as chemical names, reaction conditions, times, quantities, etc. Chemists normally write synthetic procedures in a very constrained way, which makes it surprisingly easy to parse out maybe 80-90% of the key information and mark it up with semantic meaning. But this is also a great way to open the garbage floodgates. (Coming up with counterexamples for this one is a bit too easy, so I’ll leave this one as an unreferenced assertion – that’s one of the advantages that blog posts have over peer reviewed literature articles!)

Tabulated data is also troublesome, due to problems with inadequate data formats. When a chemist chooses to provide the results of a measurement in the form of a number, it is seldom as simple as a number and its units. Whether it is a boiling point or biological activity, there is always more to the story. Very often tabulated data are entered from literature publications and stored in a seriously defective database format, such as an MDL SD file, and the result is almost unusable. For example, boiling point data might come in as any one of:

- 80
- 80C
- 80+/-2C
- >80
- <40
- ~75
- approx. 75
- 75-85
- 75..85
- 76,81,83
- 85 deg C
- 350 K
- 100F
- nd
- n/a

I’ve seen all these and a lot more, for data claiming to be clean. When somebody builds a script to assimilate this kind of data into a more structured form, there is a lot of assuming and guessing going on. Needless to say the chemist who published the original data knew perfectly well what the units were, and whatever was meant by the special modifier codes and footnotes regarding special conditions. The amount of such data that is lost in the translation to digital is quite staggering.
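For what it is worth, here is a hedged Python sketch of an import step that does less guessing. It recognises only a handful of the forms listed above, always keeps the original string, and leaves the unit empty when the source did not state one. The `Measurement` record and the `parse` function are hypothetical names for this illustration, not part of any existing format.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Measurement:
    raw: str                          # the original text, always kept
    low: Optional[float] = None
    high: Optional[float] = None
    qualifier: Optional[str] = None   # ">", "<" or "~"
    unit: Optional[str] = None        # None means the source did not say
    parsed: bool = False


PATTERN = re.compile(
    r"^(?P<qual>[<>~]|approx\.)?\s*"
    r"(?P<low>\d+(?:\.\d+)?)"
    r"(?:\s*(?:-|\.\.)\s*(?P<high>\d+(?:\.\d+)?))?"
    r"\s*(?:deg\s*)?(?P<unit>[CKF])?$"
)


def parse(raw):
    """Parse a value if it matches a known form; otherwise keep it verbatim."""
    m = PATTERN.match(raw.strip())
    if m is None:
        # Not understood: store the text untouched rather than invent a number.
        return Measurement(raw=raw)
    low = float(m["low"])
    high = float(m["high"]) if m["high"] else low
    qual = "~" if m["qual"] == "approx." else m["qual"]
    return Measurement(raw, low, high, qual, m["unit"], parsed=True)


for text in ["80", "85 deg C", "75..85", "approx. 75", "80+/-2C", "n/a"]:
    print(parse(text))
```

Entries such as "80+/-2C" or "n/a" come back with `parsed=False` and their raw text intact, so a later and smarter script, or a human, still has something to work with.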

So what is to be done about all of this?

The first step is for cheminformaticians – that’s us – to start pushing better data formats. Formats that can capture much more of the context of a chemist’s communication than those in general use today. This includes structure representations, and database formats – these are the highest priority, because they are so fundamental for cheminformatics. As long as we are using formats like MDL MOL and SMILES, and pretending that they are capable of describing the chemistry that we need to describe, or database schemata that do not use enough fields to capture the nuances of numerical data, then chemical databases will continue to be junk.

For a more rigorous representation of structure diagrams, I can recommend my own creation, the SketchEl molecule format, which is used by the open source SketchEl project and the Mobile Molecular DataSheet. It is designed to solve some of the problems mentioned in this post, and the recent extension to include inline abbreviations makes it all the more useful. It is minimalistic, extensible, and has a high degree of forward and backward compatibility. For numerical data, the issues are a bit less subtle, and the answer is: store the information, and don’t throw it away! If your script can’t parse it, that means you can’t use it.

It’s going to be a while before we have good chemical databases. More recognition of the core problems, and attention focused on solving them, are long overdue.

When I was using Beilstein/Crossfire some years ago, I met plenty of problems with physical constants.

For one series of compounds, pKa values were listed as alpha-D values (or vice-versa, I don’t remember which way it was).

pKa used to be important for structure determinations, but published values (presumably obtained by synthetic chemists) were notoriously unreliable and they still pollute the literature. More recently, there has been a tendency, at least in pharmaceutical industry documentation, to confuse calculated values (some of which are bizarre) with experimental ones.

Ahh… Tony, I think you are now getting somewhere in directing your angst and (hopefully) realizing the true (primary?) issue of the source of errors in chemical databases that many of us have known about all along.

The chemists care. The database providers care. The cheminformatics file formats are a one size fits all (and they don’t “think” or “care”, as they are just file formats :-). They corrupt information. Think about how much stereo information is incorrectly added or lost just by misuse of the “chiral” flag in the MOL file CTAB.

It gets worse. Then come all the different algorithms, in so many different flavors, and of different chemical quality. Think about all the ambiguous stereo assignments made by individual software packages due to poor 2-D coordinate choices and incorrect stereo perception (stereo provided but now no stereo or vice-versa). Think about differences in algorithms between individual software vendors and open source software.

Cheminformatics is a bit of a mess.

I put “Tony” in the message above but meant “Alex”. (Sigh) Sorry about that…

I’m not going to disagree that software places limitations on how we represent chemical information, and that there are many cases where this is to the detriment of the data that can be produced.

But you cannot ignore that humans are just as involved in the process. Increasing the power/flexibility of chemical drawing packages allows users to generate better data (good), but it also means that there are more ways to generate a wider spread of depictions by either not using the new features properly or by misusing them (bad).

To illustrate I’ll give 2 examples:

1. Stereochemical wedges: Most chemical drawing packages expect that a stereochemical wedge is used so that the narrow end of the wedge is at the stereocentre and the bold or dashed representation describes whether this bond is in front or behind the plane of the page.

However, some users will use a wedge with the fat end at the stereocentre and getting narrower to show a bond going back into the page as they feel that the wedge should reflect perspective.

There are other less important cases where wedges are misused to add perspective or an aesthetic (e.g. gem-dimethyl groups that are shown with one bold wedge and one dashed).

2. Diastereoisomers: Often where a pair of diastereoisomers is created (or transformed) a single isomer is drawn with the d.r. as a text label below. In this case I can see that it could be possible to encode something into a diagram so that the label is a property of the molecule. But even if this were done there is no guarantee users would not just add a free text label instead.

I think a key point that came through in your post was the subject of context. And I think this points to the fact that chemists need to start understanding that their schemes need to be able to be interpreted in their own right (i.e. be self-contained).

For me the depiction of the steroid skeleton that you used is very powerful – I’m not a steroid chemist – I wouldn’t know what the ‘normal’ structure is even if you supplied that description (or similar) in the paper. And while the meaning of ‘normal/typical/standard’ might be commonly accepted in this case, there might be other cases where this isn’t clear cut (7 stereocentres means that this could describe 128 different isomers). And if it is common for everyone to use such a (reduced information) depiction, they predominate and it becomes difficult to actually find the original (complete information) meaning.

I can’t see this example as anything other than a case where the human is at fault – no software can fill in information that isn’t provided.

I’d identify 3 issues:

1. As chemists we need to assess if the way we depict structures at the moment is suitable in a computer age where semantic (meta)data is rapidly becoming more important (most of our conventions predate computers – do we now need to revise some of them?)

2. We need to have software (and file formats) that fit the needs identified in point 1

3. We need to disseminate good practice in structure depiction and an understanding of why it is important (most importantly beyond cheminformaticians and information specialists to the chemist at the bench)

And this is all ignoring the whole other topic of names and identifiers which plagues databases (and the associated problems surrounding use of name-to-structure algorithms).

Christopher and Evan: the things that happen to chemical databases after the entries have been entered are a whole new series of horror stories – and the more they get documented, the better. I was trying to focus on my claim that data is basically already junk the moment it gets inserted into the stream, because the data formats are inadequate, and contemporary software does not do a good job of intermediating between what chemists want and what cheminformaticians need.

Dave: I agree 100% with everything in your comment. What we need is software that can bridge the gap: chemists need to be able to specify structures that are complete and correct from a cheminformatic point of view, but still be able to render them in an aesthetic form that is suitable for human-to-human communication. Most software accomplishes one or neither of these goals. I think it’s time for us software people to step up to the plate and make this happen. It would be nice if client software provided a guiding hand to make it easier for chemists to do this, but right now it’s not even possible, so there’s plenty of work to go around!

This is a great review of common problems in chemical structure encoding and communication. I am grateful that you are pointing these things out, since I have frequently found that, when touching on these and related subjects in conversation with both chemists and informaticians, the response is that these are “minor problems,” not worth spending too much time on (and to forget about getting funding for them). But the opposite is true. These are critical issues, which gain in significance with advanced research of complex chemical architectures (for example, metal-organic frameworks (MOF), self-assemblies, nanodevice structures), for which, I think, cheminformatics should and can offer researchers adequate tools: advanced cheminformatics for advanced materials design.

Since you mentioned the magnesium crown-ether complex, I cannot refrain from showing a possible CurlySMILES notation that encodes the magnesium ion being chelated by all six of the oxygen atoms of the crown ether:

[Mg+2]{+Lc=C1CO{!I}CCO{!I}CCO{!I}CCO{!I}CCO{!I}CCO1{!I}}

CurlySMILES ( http://www.axeleratio.com/csm/proj/main.htm ) is an open format able to capture context of chemist’s and material scientist’s communication. CurlySMILES may help to reduce junk chemical data on the side of chemical composition, (supra)molecular and/or lattice structure. The quality and reliability of associated physical properties is yet another question.